Instead of manually checking cutoffs, we can create an ROC curve (receiver operating characteristic curve), which sweeps through all possible cutoffs and plots the sensitivity and specificity at each one.

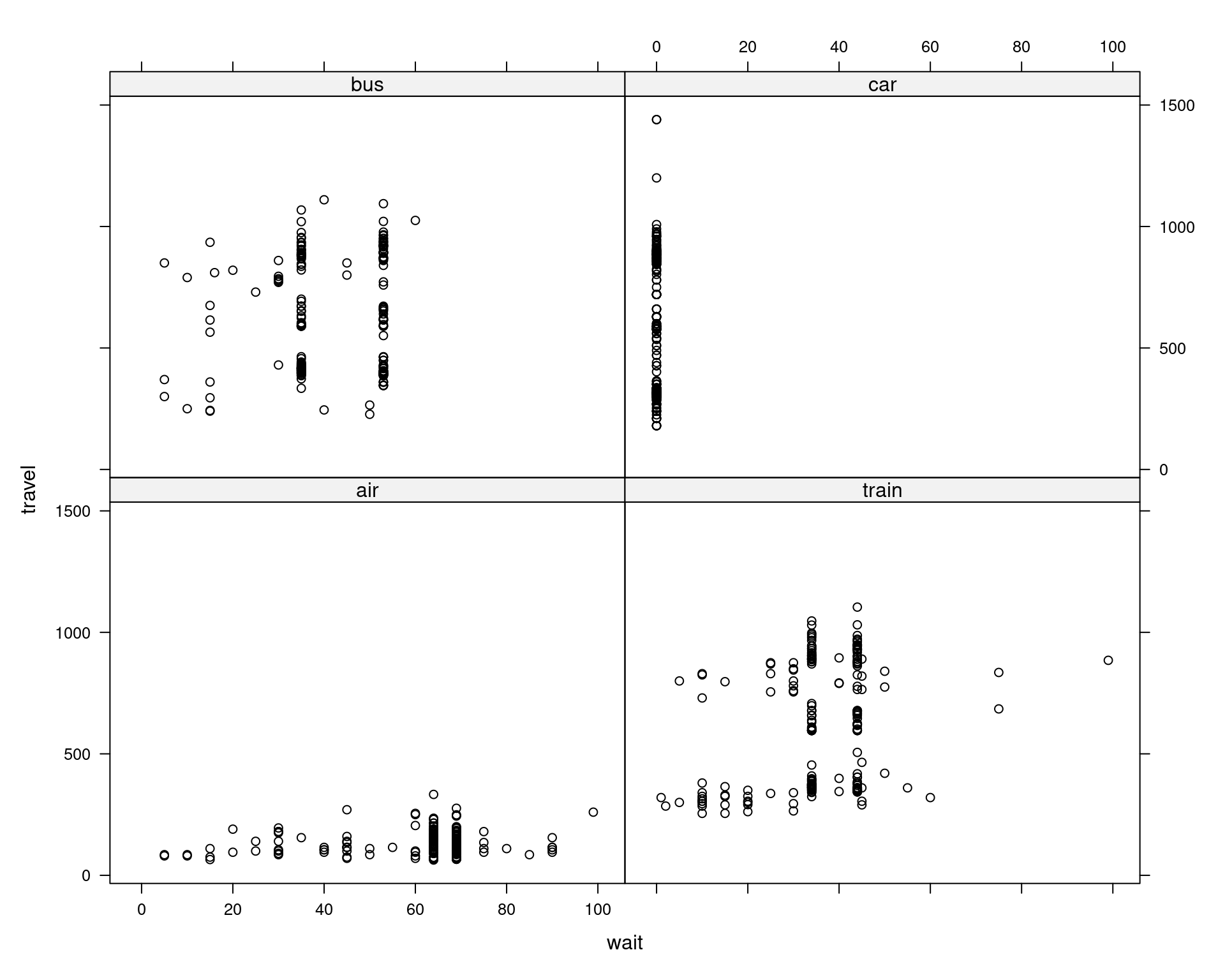
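As a rough illustration of what such a curve is built from, here is a minimal base R sketch. It assumes simulated data and a simple one-predictor logistic model; the names `x`, `y`, `fit`, `prob`, and `cutoffs` are hypothetical and are not the data or objects used in this post. The area under the curve is only approximated on a grid of cutoffs.

```r
# Simulated data for illustration only (an assumption, not the post's data).
set.seed(42)
n <- 500
x <- rnorm(n)
y <- rbinom(n, size = 1, prob = plogis(-0.5 + 2 * x))

# Fit a logistic regression and extract predicted probabilities.
fit <- glm(y ~ x, family = binomial)
prob <- predict(fit, type = "response")

# Sweep cutoffs from 0 to 1; an observation is classified positive when prob > cutoff.
cutoffs <- seq(0, 1, by = 0.01)
sens <- sapply(cutoffs, function(k) mean(prob[y == 1] > k))   # true positive rate
spec <- sapply(cutoffs, function(k) mean(prob[y == 0] <= k))  # true negative rate

# ROC curve: sensitivity against 1 - specificity, with the chance line for reference.
plot(1 - spec, sens, type = "l",
     xlab = "1 - Specificity", ylab = "Sensitivity")
abline(0, 1, lty = 2)

# Approximate area under the curve with the trapezoid rule.
fpr <- rev(1 - spec)  # reversed so the false positive rate is increasing
tpr <- rev(sens)
auc <- sum(diff(fpr) * (head(tpr, -1) + tail(tpr, -1)) / 2)
auc
```

In practice a dedicated package would usually be used for this; the loop above is only meant to show that each point on the curve corresponds to one cutoff.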

For comparison, the manual check collects accuracy, sensitivity, and specificity from the test confusion matrix at three cutoffs:

    metrics = rbind(
      c(test_con_mat_10$overall["Accuracy"],
        test_con_mat_10$byClass["Sensitivity"],
        test_con_mat_10$byClass["Specificity"]),
      c(test_con_mat_50$overall["Accuracy"],
        test_con_mat_50$byClass["Sensitivity"],
        test_con_mat_50$byClass["Specificity"]),
      c(test_con_mat_90$overall["Accuracy"],
        test_con_mat_90$byClass["Sensitivity"],
        test_con_mat_90$byClass["Specificity"])
    )
    rownames(metrics) = c("c = 0.10", "c = 0.50", "c = 0.90")
    metrics  # columns: Accuracy, Sensitivity, Specificity

We see that sensitivity decreases as the cutoff is increased. Conversely, specificity increases as the cutoff increases. This is useful if we are more interested in a particular error, instead of giving them equal weight. Note that usually the best accuracy will be seen near \(c = 0.50\).

Typical situations that might be modeled by binary logistic regression: consumers decide to buy or not to buy, a product may pass or fail quality control, there are good or poor credit risks, and an employee may be promoted or not. In binary logistic regression the dependent variable has two categories, whereas in multinomial logistic regression it has more than two.

Before modeling, a pairplot gives a quick overview of any patterns in the data.

There is also an alternative way to add the logistic curve to the plot, which is not run here.

Though ggeffects() should be compatible with multinom, the plot does not display confidence intervals. If I plot the same data with effects(), I do get the CIs.

If f returns a single component, then this plots the surface defined by \(z = f(x, y)\) over the rectangular domain with \(x\) ranging over \(u\) and \(y\) ranging over \(v\).

This model metric is used to evaluate how well a multinomial classification model performs.
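Returning to the cutoff comparison, the same kind of table can be sketched in base R without any confusion matrix objects. This is only an illustration under stated assumptions: the data are simulated, the metrics are computed in-sample rather than on a test set, and `metrics_at_cutoff()` is a hypothetical helper, not a function from any package.

```r
# Simulated data for illustration only (an assumption, not the post's data).
set.seed(1)
n <- 400
x <- rnorm(n)
actual <- rbinom(n, size = 1, prob = plogis(-1 + 1.5 * x))

fit <- glm(actual ~ x, family = binomial)
prob <- predict(fit, type = "response")

# Hypothetical helper: classify as positive when prob > cutoff,
# then compute the three metrics directly from their definitions.
metrics_at_cutoff <- function(prob, actual, cutoff) {
  pred <- as.integer(prob > cutoff)
  c(Accuracy    = mean(pred == actual),
    Sensitivity = mean(pred[actual == 1] == 1),
    Specificity = mean(pred[actual == 0] == 0))
}

cutoffs <- c(0.10, 0.50, 0.90)
# sapply returns one column per cutoff; transpose so each row is a cutoff.
metrics <- t(sapply(cutoffs, function(k) metrics_at_cutoff(prob, actual, k)))
rownames(metrics) <- sprintf("c = %.2f", cutoffs)
metrics
```

Because raising the cutoff can only turn predicted positives into predicted negatives, the Sensitivity column cannot increase and the Specificity column cannot decrease as you move down the rows.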
